About Us

Using a combination of two machine learning algorithms, VQGAN and CLIP, we generate artworks from a description or text prompt. VQGAN generates an image based on the data sets it was trained on, whereas CLIP determines how well the description matches the picture. Working in Google Colab, a cloud-based programming environment, we fed our text prompts to the algorithm and then adjusted its variables to improve the results. We also used DALL-E, which was trained on a dataset of text-image pairs, to generate images from text prompts. DALL-E relies on the language understanding and context provided by GPT-3 to create a fitting image. It made image generation easier for us because its inputs are processed as natural language, so we can tell the algorithm exactly what to do in the prompt. We made sure to explore several of DALL-E’s capabilities, such as combining unrelated concepts, animal illustrations...

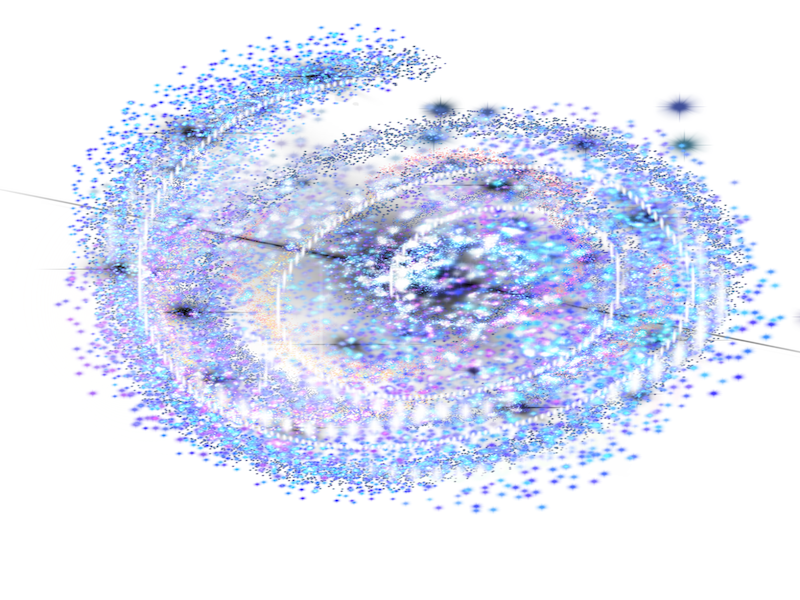

VQGAN

Using a combination of two machine learning algorithms, VQGAN and CLIP, we generate artworks from a description or text prompt. VQGAN generates an image based on the data sets it was trained on, whereas CLIP determines how well the description matches the picture.
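The generate-then-score loop described above can be illustrated with a deliberately tiny sketch. Everything in it is an assumption made for illustration: `toy_generator` stands in for VQGAN's decoder, `toy_score` stands in for CLIP's text-image similarity, and the "image" is just a short list of numbers. The real VQGAN+CLIP notebooks update the latent with gradient-based optimization through both networks; this sketch swaps in simple random hill climbing so that it runs with the Python standard library alone.

```python
import math
import random


def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def toy_generator(latent):
    """Hypothetical stand-in for the VQGAN decoder: latent -> 'image'."""
    return [math.tanh(v) for v in latent]


def toy_score(image, text_embedding):
    """Hypothetical stand-in for CLIP: how well the image matches the text."""
    return cosine(image, text_embedding)


def guided_search(text_embedding, steps=200, step_size=0.1, seed=0):
    """Random hill climbing: keep a latent change only if the score improves."""
    rng = random.Random(seed)
    latent = [rng.uniform(-1, 1) for _ in text_embedding]
    best = toy_score(toy_generator(latent), text_embedding)
    history = [best]  # best score seen so far, recorded after every step
    for _ in range(steps):
        candidate = [v + rng.gauss(0, step_size) for v in latent]
        score = toy_score(toy_generator(candidate), text_embedding)
        if score > best:
            latent, best = candidate, score
        history.append(best)
    return latent, history


if __name__ == "__main__":
    # A made-up 4-number "text embedding" for illustration only.
    prompt_embedding = [0.5, -0.2, 0.8, 0.1]
    _, scores = guided_search(prompt_embedding, steps=300, seed=1)
    print(f"score at start: {scores[0]:.3f}, score at end: {scores[-1]:.3f}")
```

The point of the sketch is the division of labour: one component proposes images, the other only judges them against the prompt, and the judged score steers the next proposal.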

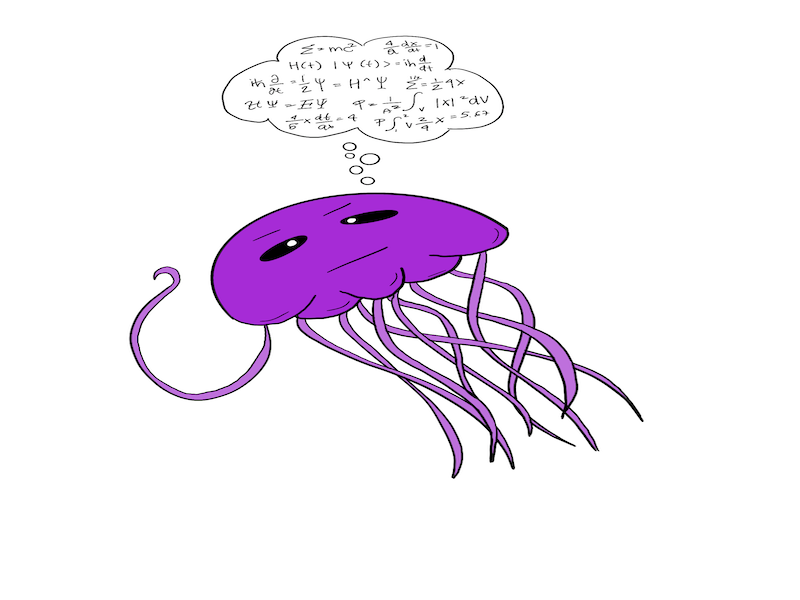

CLIPDRAW

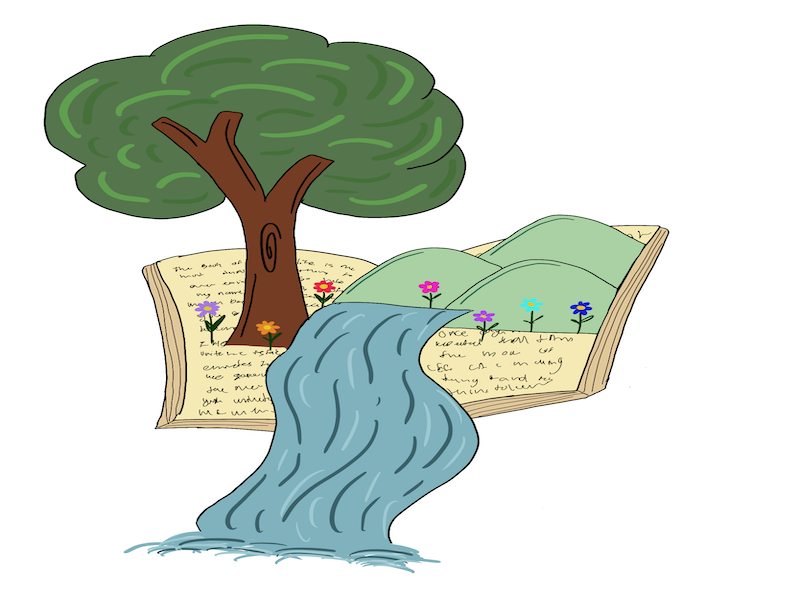

CLIPDraw is a text-to-drawing synthesis algorithm that, unlike the other machine learning algorithms presented here, does not render photorealistic images but instead produces simple drawings.

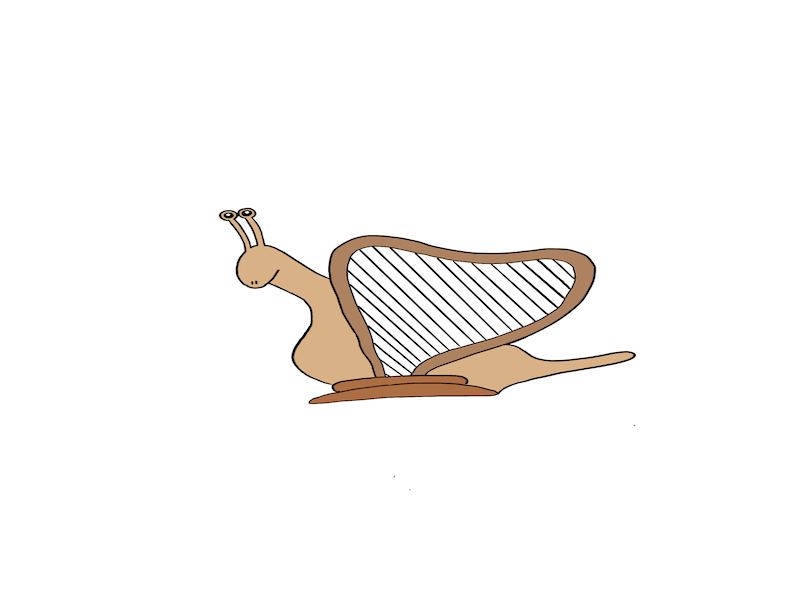

HUMAN DRAWINGS

Since our aim is to compare human drawing skills with those of AI, we asked a dozen volunteers to sketch whatever came to mind when reading the text prompt. To get diverse results, the participants ranged from experienced artists to amateurs.

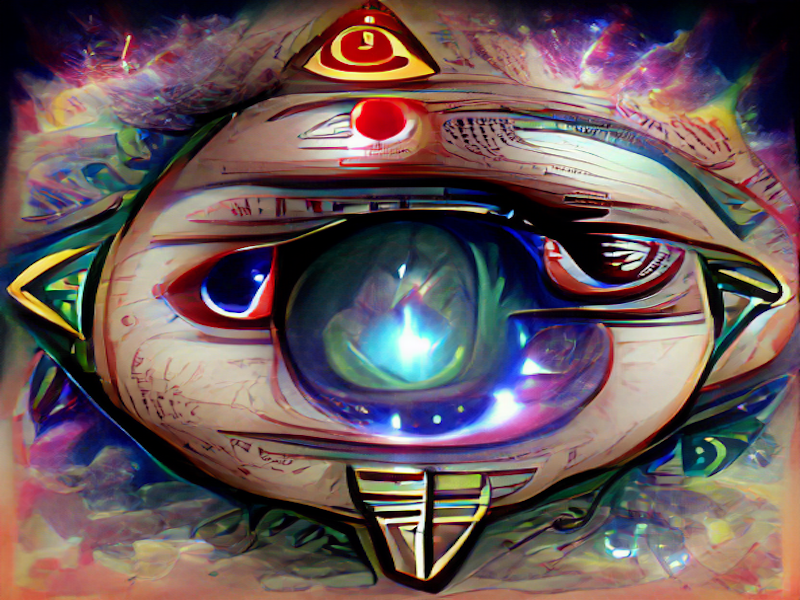

DALL-E

Similarly, we used DALL-E, which was trained on a dataset of text-image pairs, to generate images from text prompts. DALL-E relies on the language understanding and context provided by GPT-3 to create a fitting image.

Image Comparison

The list of compared images from humans and AI

- All
- DALL-E
- CLIP
- VQGAN